
Intel VT-x or AMD-V – Uses hardware extensions to run and isolate guest
Intel VT-x/EPT or AMD-V/RVI – Uses hardware extensions to run and

Future AMD and Intel processors will support nested page tables (NPT). AMD's version is called Rapid Virtualization Indexing (RVI); Intel's is called Extended Page Tables (EPT). This new CPU technology can help reduce the performance overhead of virtualizing large applications such as databases.

http://en.wikipedia.org/wiki/Intel_VT#Intel_Virtualization_Technology_for_x86_.28Intel_VT-x.29

EPT: Extended Page Table (EPT). When this feature is active, the ordinary IA-32 page

Hardware Virtualization: the Nuts and Bolts

Introduction

First dual-core CPUs in 2005, then quad-core in 2007: the multi-core snowball is rolling. The desktop market is still trying to figure out how to wield all this power; meanwhile, the server market is eagerly awaiting the octal-core CPUs of 2009. The difference is that

While a lot has been written about the opportunities that virtualization brings (consolidation, hosting legacy applications, resource balancing, faster provisioning...), most publications about virtualization are rather vague about the "nuts and bolts". Performance? Isn't that a non-issue? Modern virtualization solutions surely do not lose more than a few percent in performance, right? We'll show you that the answer is quite different from what some of the sponsored white papers want you to believe.

In this first article we discuss "hardware virtualization", i.e. the technology that makes it possible to offer several virtualized server such as VMware's ESX, Xen, and Windows 2008's Hyper-V. We recently provided an introduction

Hardware or Machine Virtualization versus "Everyday" Virtualization

Every one of us has already used virtualization to some degree. In fact, most of us wouldn't be very productive without the virtualization that a modern OS offers us. A "natively running" server or workstation with a modern OS already virtualizes quite a

So why do we install a hypervisor (or VMM) to make fully virtualized servers possible if our modern operating systems already provide some degree of virtualization? Operating systems isolate applications only weakly, by giving each process a well-defined

A Matter of Privileges

To create several virtual servers on one physical machine, a new software layer is necessary: the hypervisor, also called the Virtual Machine Monitor (VMM). Its most important role is to arbitrate access to the underlying hardware, so that

To understand how the VMM actually works, we first have to understand how a modern operating system works.
Most modern operating systems work with two modes:

The whole user/kernel mode arrangement is based on the fact that RAM is divided into pages. (It is also possible to work with segment registers and tables, but that is a discussion for another article.) Before a privileged instruction is executed, the CPU

Many publications illustrate this 2-bit code graphically as four "onion rings" (as you can see in this

Ring deprivileging with software virtualization: the guest OSes no longer run in ring 0, but with fewer privileges in ring 1.
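The privilege check that makes ring deprivileging work can be modelled in a few lines. This is a toy sketch, not how the silicon implements it: the `execute` function and the `PRIVILEGED` set are hypothetical, and real CPUs also check IOPL and descriptor privilege levels. It shows the key point: a kernel moved from ring 0 to ring 1 faults on privileged instructions, and that fault lands in ring 0, where the VMM lives.

```python
# Toy model of the x86 privilege check (not how the silicon implements it).
# The current privilege level (CPL) is a 2-bit value: ring 0 (kernel) to ring 3 (user).

class GeneralProtectionFault(Exception):
    """Stands in for the #GP fault the CPU raises on a privilege violation."""

# Hypothetical subset of instructions that require ring 0.
PRIVILEGED = {"HLT", "LGDT", "MOV_TO_CR3", "WRMSR"}

def execute(instruction: str, cpl: int) -> str:
    if cpl not in (0, 1, 2, 3):
        raise ValueError("CPL is a 2-bit field: 0..3")
    if instruction in PRIVILEGED and cpl != 0:
        # Outside ring 0 the CPU refuses and faults; a VMM running in ring 0
        # receives this fault and can emulate the instruction for the guest.
        raise GeneralProtectionFault(f"{instruction} at ring {cpl}")
    return f"{instruction} executed at ring {cpl}"

print(execute("HLT", 0))       # native kernel in ring 0: allowed
try:
    execute("HLT", 1)          # deprivileged guest kernel in ring 1: faults
except GeneralProtectionFault as fault:
    print("trapped:", fault)
```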

A technique that all software-based virtualization solutions use is thus ring deprivileging: the operating system that originally runs in ring 0 is moved to a less privileged ring, such as ring 1. This allows the VMM to control the guest OS's access to

Virtualization Challenges

The grandfathers of virtualization, such as the IBM S/370, used a very robust system to allow the hypervisor to control the virtual machines. Every privileged instruction executed by a virtual machine caused a "trap", an error, as it was trying to execute a "resource

This kind of virtualization was not possible on x86, as the 32/64-bit Intel ISA does not trap every event that should lead to VMM intervention. One example is the POPF instruction, which can disable and enable interrupts. The problem is that if this instruction

The above is much more than a quick, simplified history lesson. Keep it in mind when we discuss what Intel and AMD have been doing with VT-x and AMD-V.

Binary Translation

VMware didn't wait for Intel or AMD to solve the "x86 stealth instructions" problem and launched its solution at the end of the previous century (1999). To uncloak the stealthy x86 instructions, VMware used binary translation (unfortunately, a

VMware translates the binary code that the kernel of a guest OS wants to execute on the fly and stores the adapted x86 code in a Translator Cache (TC). User applications will not be touched by VMware's Binary Translator (BT), as it knows/assumes that user

User applications are not translated, but run directly. Binary translation only happens when the guest OS kernel gets called. It is the kernel code that has to go through the "x86 to slightly longer x86" code translation. You could say that the kernel of the guest OS is no longer running. The kernel code in memory is nothing more than input for the BT; it is the BT translated

In many cases, the translated kernel code will be an exact copy.
However, there are several cases where the BT must make the "translated" kernel code a bit longer than the original code. If the kernel of the guest OS has to run a privileged instruction, the

Binary translation from x86 to x86 virtualized in action. (Image: VMware[2])

Binary translation is all about scanning the code that the kernel of the guest OS is about to execute and replacing it, on the fly, with something safe (virtualized). By "safe", we mean safe for the other guest OSes and the VMM. VMware

The TC is not only a Translator Cache but also a bit of a Trace Cache, as it keeps track of the control flow of the program. Each time the kernel jumps to another address location, the BT cannot copy this exactly. If the original code had to jump 100 bytes

It is clear that replacing code with "safer" code is a lot less costly than letting privileged instructions result in traps and then handling those traps afterwards. Nevertheless, that doesn't mean that the overhead of this kind of virtualization is always
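The core of the translate-and-cache idea can be sketched as follows. This is a minimal illustration, assuming instructions are plain mnemonic strings; the `SENSITIVE` set, the `BinaryTranslator` class, and the `vmm_emulate_*` call targets are hypothetical names, not VMware's design. It copies most kernel code unchanged, swaps sensitive instructions (including the stealthy POPF) for calls into the VMM, and caches each translated block by address. It does not model the jump-target fixups mentioned above.

```python
from dataclasses import dataclass, field

# Hypothetical set of guest-kernel instructions that must not run unmodified
# in ring 1. POPF is listed because, when IOPL < CPL, it silently leaves the
# interrupt flag unchanged instead of trapping (a "stealth" instruction).
SENSITIVE = {"POPF", "CLI", "STI", "MOV_TO_CR3", "SYSEXIT"}

@dataclass
class BinaryTranslator:
    # Translator Cache: guest code address -> translated block
    cache: dict = field(default_factory=dict)
    blocks_translated: int = 0

    def translate(self, addr: int, block: list) -> list:
        if addr in self.cache:
            return self.cache[addr]          # cache hit: no translation cost
        translated = []
        for insn in block:
            if insn in SENSITIVE:
                # Replace with a call into the VMM, which updates the
                # virtual CPU state safely on the guest's behalf.
                translated.append(f"CALL vmm_emulate_{insn.lower()}")
            else:
                translated.append(insn)      # most code is copied unchanged
        self.cache[addr] = translated
        self.blocks_translated += 1
        return translated

bt = BinaryTranslator()
print(bt.translate(0x1000, ["MOV", "POPF", "ADD"]))
```

Translating the instructions that would trap anyway (CLI, MOV to CR3) avoids the cost of a fault; translating POPF is what makes the VMM see it at all.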

Especially the first three are interesting. The last one is hard in an OS running in "native mode" too, so it is only normal that this doesn't get any better if you run more than one OS.

System Calls

Much has been written about kernels, but it remains one of the most confusing subjects. Some publications give the impression that the kernel is some kind of "overlord" process that is always watching in the background. This is wrong, of course, because this

A kernel is just another process that gets time slices from the multitasking CPU. The difference from other processes is that it has privileged access to CPU instructions that other processes don't have. Therefore, "normal" (user) processes will have to

A system call is thus the result of a user application requesting a service from the kernel. x86 provides a very low-latency way to get system calls done: SYSENTER/SYSEXIT (or SYSCALL/SYSRET). A system call will give the Virtual Machine Monitor, especially

A system call is a lot more complex when it happens on a virtualized machine.

When the binary-translated guest OS code is done, it will use SYSEXIT to return to the user application. However, the guest OS is running in ring 1 and doesn't have the necessary privileges to execute SYSEXIT, so the CPU faults to ring 0 and
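Counting the ring transitions makes the extra cost visible. The sketch below is a simplified model, assuming a binary-translated guest whose kernel runs in ring 1; the function name and step labels are hypothetical. SYSENTER always enters ring 0, so it lands in the VMM rather than in the guest kernel, and the guest's SYSEXIT faults back to the VMM, doubling the transitions of a native system call.

```python
# Simplified model of the ring transitions in one system call round trip.
# Assumes a binary-translated guest whose kernel runs in ring 1.

def syscall_round_trip(virtualized: bool) -> list:
    """Return (step, from_ring, to_ring) tuples for one system call."""
    if not virtualized:
        return [
            ("SYSENTER into kernel", 3, 0),
            ("SYSEXIT back to user", 0, 3),
        ]
    return [
        ("SYSENTER lands in the VMM", 3, 0),        # SYSENTER always targets ring 0
        ("VMM passes control to guest kernel", 0, 1),
        ("guest SYSEXIT faults to the VMM", 1, 0),  # ring 1 may not execute SYSEXIT
        ("VMM returns to user application", 0, 3),
    ]

for mode in (False, True):
    steps = syscall_round_trip(mode)
    print("virtualized" if mode else "native", len(steps), "transitions")
```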

If you have a few of those virtualized machines running, system calls are suddenly much more than the background noise they are on a modern OS running natively.

I/O Virtualization

I/O is a big issue for any form of virtualization. If your virtualized server lacks CPU power, you can just add more CPUs or cores (i.e. replace dual-core CPUs with quad-cores). However, the memory bandwidth, the chipset, and the storage HBA are in most cases

A real 3.46 GHz Intel Xeon processor runs on an emulated BX chipset: we are running inside a VM.

If you inspect the hardware of a virtual machine in ESX, for example, you can see that modern CPUs have to work together with the good but nine-year-old BX chipset, and that your HBA is always an old BusLogic or LSI card. This also means that the newest

Memory Management

An OS maintains page tables to translate virtual memory pages into physical memory addresses. All modern x86 CPUs provide support for virtual memory in hardware. The translation from virtual to physical addresses is performed by the memory management

It is clear that a guest OS running on a virtual machine cannot have access to the real page tables. Instead, the guest OS sees page tables which run on an emulated MMU. These tables give the guest OS the illusion that it can translate the virtual guest

Every time the guest OS modifies its page mapping, the virtual MMU module will capture (trap) the modification and adjust the shadow page tables accordingly. As you've likely guessed, this costs a lot of CPU cycles. Depending on the virtualization technique

Paravirtualization

Paravirtualization is not that different from binary translation. BT changes "critical" or "dangerous" code into harmless code on the fly; paravirtualization does the same thing, but in the source code. Of course, changing the source code allows a bit more

The hypervisor provides hypercall interfaces for critical kernel operations such as memory management, interrupt handling, and timekeeping. These hypercalls only happen when necessary. For example, most of the memory management is done by the
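The hypercall idea can be sketched as a guest kernel asking the hypervisor to update page tables on its behalf, rather than writing them itself. This is a minimal sketch under stated assumptions: the `Hypervisor` class and `hypercall_update_page_table` method are hypothetical, not Xen's actual interface. It shows two properties that make hypercalls cheaper than trapping: the hypervisor validates requests before applying them, and one call can carry a whole batch of updates.

```python
class Hypervisor:
    """Hypothetical hypervisor that validates a guest's page-table updates."""

    def __init__(self, guest_frames: set):
        self.guest_frames = guest_frames     # machine frames owned by this guest
        self.page_table = {}                 # virtual page -> machine frame
        self.hypercalls = 0

    def hypercall_update_page_table(self, updates: list) -> None:
        # One guest-to-hypervisor transition covers a whole batch of updates.
        self.hypercalls += 1
        # Validate everything first so a bad request changes nothing.
        for _vpage, frame in updates:
            if frame not in self.guest_frames:
                raise PermissionError(f"frame {frame} does not belong to this guest")
        for vpage, frame in updates:
            self.page_table[vpage] = frame

# The paravirtualized guest kernel calls the hypervisor instead of writing
# the real page tables itself (which it no longer has the privilege to do).
hv = Hypervisor(guest_frames={10, 11, 12})
hv.hypercall_update_page_table([(0, 10), (1, 11), (2, 12)])
print(hv.page_table, "after", hv.hypercalls, "hypercall")
```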

Simplified front end drivers interface to "normal" Linux backend drivers.

The best feature of Xen's implementation of virtualization is the way I/O is handled. I/O devices in the VM are just simplified interfaces that link to real native drivers in a privileged VM (called Domain 0 in Xen). This means there is no emulation involved,

To sum up, paravirtualization is an excellent concept, as it eliminates (binary) translation overhead completely, reduces I/O driver overhead significantly, and cuts system call overhead somewhat. Very frequent interrupts and system calls can still cause overhead. We'll
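The front end/back end split can be sketched as a queue shared between the guest and Domain 0. This is a toy model, assuming a simple bounded request/response ring; `SharedRing`, `Frontend`, `Backend`, and the `native_driver` callable are hypothetical names, and real Xen rings live in shared memory pages and are signalled through event channels. The guest-side driver does no device emulation at all; it only queues requests, and the Domain 0 side passes them to the real driver.

```python
from collections import deque

class SharedRing:
    """Toy stand-in for the shared-memory request/response ring."""
    def __init__(self, size: int):
        self.size = size
        self.requests = deque()
        self.responses = deque()

class Frontend:
    """Guest-side driver: no device emulation, it only queues requests."""
    def __init__(self, ring: SharedRing):
        self.ring = ring

    def submit(self, op: str, sector: int) -> bool:
        if len(self.ring.requests) >= self.ring.size:
            return False                     # ring full: caller retries later
        self.ring.requests.append((op, sector))
        return True

class Backend:
    """Domain 0 side: hands each request to the real (native) driver."""
    def __init__(self, ring: SharedRing, native_driver):
        self.ring = ring
        self.native_driver = native_driver   # any callable(op, sector) -> result

    def process(self) -> int:
        handled = 0
        while self.ring.requests:
            op, sector = self.ring.requests.popleft()
            self.ring.responses.append((sector, self.native_driver(op, sector)))
            handled += 1
        return handled

ring = SharedRing(size=2)
front = Frontend(ring)
back = Backend(ring, native_driver=lambda op, sector: f"{op} sector {sector} ok")
front.submit("read", 7)
front.submit("read", 8)
print(back.process(), list(ring.responses))
```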

Software paravirtualization doesn't handle 64-bit OSes very well.

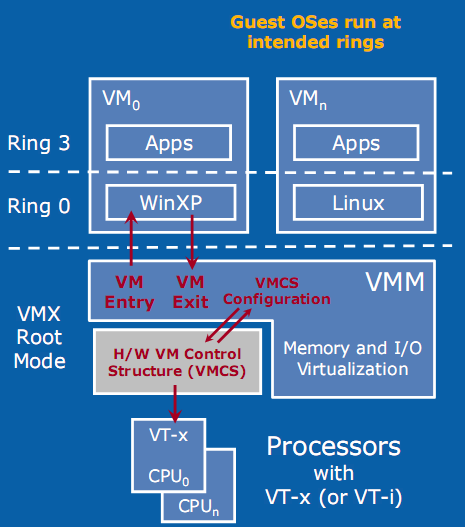

The second problem might not seem like a big problem, but in order to protect the OS, page table switching is necessary. This results in two system calls and a TLB flush, which is very costly. Let us see what Intel's VT-x and AMD-V can offer us.

Hardware Accelerated Virtualization: Intel VT-x and AMD SVM

Hardware virtualization should reduce all that overhead to a minimum, right? Unfortunately, that is not the case. Hardware virtualization is not an improved version of binary translation or paravirtualization. No, the first idea behind hardware virtualization

One big advantage is that the guest OS runs at its intended privilege level (ring 0), while the VMM runs at a new ring with an even higher privilege level (ring -1, or "root mode"). System calls do not automatically result

With HW virtualization, the guest OS is back where it belongs: ring 0.

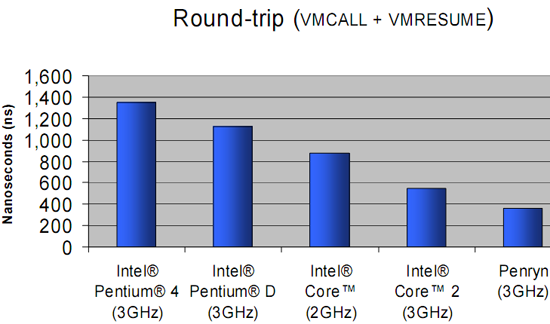
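With the guest kernel back in ring 0, the VMM only gets involved when the guest hits an event the VMM asked the CPU to intercept. The loop below is a simplified sketch of that behaviour; `run_guest` and the contents of `DEFAULT_EXIT_EVENTS` are hypothetical and purely illustrative, since real VT-x and SVM let the VMM configure many (not all) exit conditions.

```python
# Hypothetical set of events the VMM has asked the CPU to intercept.
# Many exit conditions are configurable in real VT-x/SVM; this set is illustrative.
DEFAULT_EXIT_EVENTS = frozenset({"CPUID", "OUT", "HLT"})

def run_guest(trace: list, exit_events=DEFAULT_EXIT_EVENTS) -> list:
    """Run a guest instruction trace and return the events that caused a VMexit."""
    exits = []
    for insn in trace:
        if insn in exit_events:
            # VMexit: the CPU saves guest state and switches to root mode (VMM).
            # The VMM would emulate the instruction here, then issue a VMentry.
            exits.append(insn)
        # Everything else, including guest ring-0 code and system calls,
        # runs directly on the CPU without VMM involvement.
    return exits

print(run_guest(["SYSCALL", "MOV", "CPUID", "SYSRET", "ADD"]))
```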

The problem is that, even though it is implemented in hardware, each transition from the VM to the VMM (VMexit) and back (VMentry) requires a fixed (and large) number of CPU cycles. The specific number of these "overhead cycles" depends on the internal

The VM/VMM round trip of hardware virtualization is thus a rather heavy event. When Intel VT-x or AMD SVM (or AMD-V) have to handle relatively complex operations such as system calls (which take a lot of CPU cycles to handle anyway), the VMexit/VMentry switching

Relatively simple operations such as creating processes, context switches, and small page table updates take relatively few cycles when run natively, so the "switching to VMM and back" time wastes a proportionally (compared to the non-virtualized native situation)
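Why a fixed round-trip cost hurts cheap operations most is plain arithmetic. The sketch assumes one VMexit/VMentry round trip per operation and a hypothetical round-trip cost of 1,000 cycles; the operation costs are also made up for illustration, not measurements.

```python
def slowdown(native_cycles: float, roundtrip_cycles: float, exits_per_op: int = 1) -> float:
    """Virtualized time divided by native time for one operation.

    Assumes each operation triggers `exits_per_op` VMexit/VMentry round trips,
    each costing a fixed `roundtrip_cycles`.
    """
    return (native_cycles + exits_per_op * roundtrip_cycles) / native_cycles

ROUNDTRIP = 1000  # hypothetical VMexit + VMentry cost in cycles
print(slowdown(100_000, ROUNDTRIP))  # heavy operation
print(slowdown(200, ROUNDTRIP))      # light operation
```

The same 1,000 cycles add 1% to a 100,000-cycle operation but make a 200-cycle operation six times slower.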

VMM entry and exit latency has been lowered over time with each new Xeon family.

The first way Intel and AMD countered this problem was to reduce the number of cycles that the VT-x and AMD-V instructions take. For example, the VMentry latency was reduced from 634 cycles (Xeon Paxville, or Xeon 70xx) to 352 cycles in the Woodcrest (Xeon 51xx), Clovertown

Enter and exit VMM numbers (in ns) for the different Intel families.
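Converting the cycle counts above into nanoseconds only needs the clock frequency. The sketch assumes a 3.0 GHz clock for both chips so that only the cycle reduction shows; that clock is an assumption, and real parts may differ in clock speed, which also affects nanosecond figures.

```python
def cycles_to_ns(cycles: int, clock_ghz: float) -> float:
    """At f GHz the CPU completes f cycles per nanosecond."""
    return cycles / clock_ghz

OLD_VMENTRY, NEW_VMENTRY = 634, 352   # cycles, from the text
CLOCK_GHZ = 3.0                       # assumed clock, the same for both chips

print(f"{cycles_to_ns(OLD_VMENTRY, CLOCK_GHZ):.0f} ns -> {cycles_to_ns(NEW_VMENTRY, CLOCK_GHZ):.0f} ns")
print(f"{1 - NEW_VMENTRY / OLD_VMENTRY:.0%} fewer cycles")
```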

The second strategy is to reduce the number of VMM events. After all, the total virtualization overhead equals the number of events times the cost per event. Summed over every type $i$ of VMexit/VMentry event:

$$\text{Total VT overhead} = \sum_i f_i \times L_i$$

where $f_i$ is the frequency of event type $i$ and $L_i$ is the latency of one such event.

The Virtual Machine Control Block (VMCB in AMD SVM; Intel's equivalent in VT-x is the Virtual Machine Control Structure, VMCS), a sort of table that is placed in memory (in cache), can help. It contains the state of the virtual CPU(s) for each guest OS. It allows the guest OSes to run directly without interference

The second generation: Intel's EPT and AMD's NPT

As we discussed in "Memory Management", managing the virtual memory of the different guest OSes and translating it into physical pages can be extremely CPU intensive.

Without shadow pages we would have to translate virtual memory (blue) into "guest OS physical memory" (gray) and then translate the latter into real physical memory (green). Luckily, the "shadow page table" trick avoids the double bookkeeping by making

The second generation of hardware virtualization, AMD's nested paging and Intel's EPT technology, partly solves this problem by brute hardware force.
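The shadow page table trick can be sketched with plain dictionaries standing in for page tables. This is a toy model, assuming page numbers only (no offsets, permissions, or multi-level tables); `ShadowMMU` and its method names are hypothetical. Every guest page-table write traps to the VMM, which composes guest-virtual to guest-physical with guest-physical to host-physical once and stores the result, so the hardware needs a single lookup per translation.

```python
class ShadowMMU:
    """Toy shadow paging for a single guest (page numbers only, no permissions)."""

    def __init__(self, p2m: dict):
        self.p2m = p2m          # VMM's map: guest-physical page -> host-physical page
        self.guest_pt = {}      # guest's own table: guest-virtual -> guest-physical
        self.shadow_pt = {}     # table the real MMU walks: guest-virtual -> host-physical
        self.traps = 0

    def guest_writes_pte(self, gvpage: int, gppage: int) -> None:
        # The guest believes it is editing its page table; the write traps to the VMM.
        self.traps += 1
        self.guest_pt[gvpage] = gppage
        # The VMM composes both translations once and stores the result.
        self.shadow_pt[gvpage] = self.p2m[gppage]

    def translate(self, gvpage: int) -> int:
        # The hardware needs only the shadow table: one lookup instead of two.
        return self.shadow_pt[gvpage]

mmu = ShadowMMU(p2m={0: 40, 1: 41})
mmu.guest_writes_pte(gvpage=5, gppage=1)
print(mmu.translate(5), "after", mmu.traps, "trap")
```

The cost moves to the `traps` counter: every page-mapping change made by the guest is a VMM intervention, which is exactly what nested paging removes.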

EPT or Nested Page Tables is based on a "super" TLB that keeps track of both the Guest OS and the VMM memory management.

As you can see in the picture above, a CPU with hardware support for nested paging caches both the virtual memory (guest OS) to physical memory (guest OS) translation and the physical memory (guest OS) to real physical memory translation in the TLB. The TLB has a new

This makes the VMM a lot simpler and completely eliminates the need to constantly update the shadow page tables. If we consider that the hypervisor has to intervene for each update of the shadow page tables (one per VM running), it is clear that nested

There is only one downside: nested paging or EPT makes the virtual to real physical address translation a lot more complex if the TLB does not have the right entry. For each step we take in the blue area, we need to do all the steps in the orange area. Thus,

In order to compensate, a CPU needs much larger TLBs than before, and TLB misses are now extremely costly. If a TLB miss happens in a native (non-virtualized) situation, we have to do four searches in the main memory. A TLB miss then results in a performance
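"For each step in the blue area, all the steps in the orange area" can be turned into a count of memory references per TLB miss. The sketch assumes four-level page tables for both guest and host and ignores the caches real CPUs keep for intermediate page-table entries; the function name is hypothetical. With $n$ guest levels and $m$ host levels the count is $nm + n + m = (n+1)(m+1) - 1$, which gives 24 for $n = m = 4$.

```python
def tlb_miss_memory_refs(guest_levels: int = 4, host_levels: int = 4) -> int:
    """Memory references for one TLB miss with nested paging (EPT/NPT).

    Each guest page-table entry lives at a guest-physical address, which
    must first be translated through the host tables before it can be read.
    Setting host_levels=0 gives the native, non-virtualized case.
    """
    refs = 0
    for _ in range(guest_levels):
        refs += host_levels   # host walk to locate this guest table in real memory
        refs += 1             # read the guest page-table entry itself
    refs += host_levels       # host walk for the final guest-physical address
    return refs

print(tlb_miss_memory_refs(4, 0))  # native 4-level walk
print(tlb_miss_memory_refs(4, 4))  # nested 4-level guest + 4-level host walk
```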

2009-11-26 09:28